Posts Tagged neuroscience

Another Haiku Detector Update, and Some Observations on Mac Speech Synthesis

Posted by Angela Brett in Haiku Detector on May 18, 2015

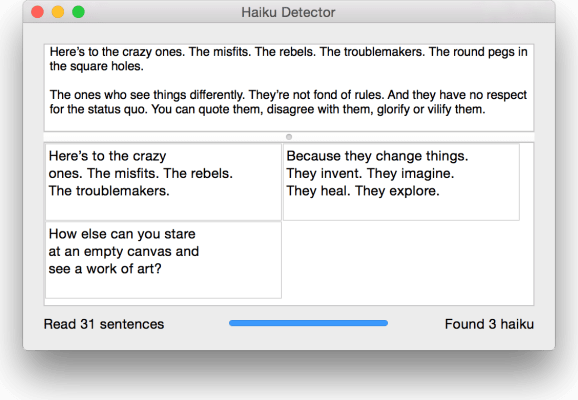

I subjected Haiku Detector to some serious stress-testing with a 29MB text file (that’s 671481 sentences, containing 16810 haiku, of which some are intentional) a few days ago, and kept finding more things that needed fixing or could do with improvement. A few days in a nerdsniped daze later, I have a new version, and some interesting tidbits about the way Mac speech synthesis pronounces things. Here’s some of what I did:

- Tweaked the user interface a bit, partly to improve responsiveness after 10000 or so haiku have been found.

- Made the list of haiku stay scrolled to the bottom so you can see the new ones as they’re found.

- Added a progress bar instead of the spinner that was there before.

- Fixed a memory issue.

- Changed a setting that should have made it work in Mac OS X 10.6, as I said here it would. I didn’t have a 10.6 system to test it on, though, and it turns out it does not run on one. I think 10.7 (Lion) is the lowest version it will run on.

- Added some example text on startup so that it’s easier to know what to do.

- Made it a Developer ID signed application, because now that I have a bit more time to do Mac development (since I don’t have a day job; would you like to hire me?), it was worth signing up to the paid Mac Developer Program again. Once I get an icon for Haiku Detector, I’ll put it on the app store.

- Fixed a few bugs and made a few other changes relating to how syllables are counted, which lines certain punctuation goes on, and which things are counted as haiku.

That last item is more difficult than you’d think, because the Mac speech synthesis engine (which I use to count syllables for Haiku Detector) is very clever, and pronounces words differently depending on context and punctuation. Going through words until the right number of syllables for a given line of the haiku is reached can produce different results depending on which punctuation you keep. A sentence or group of sentences which is pronounced with 17 syllables as a whole might not have words in it which add up to 17 syllables, or it might, but only if you keep a given punctuation mark at the start of one line or the end of the previous. There are therefore many cases where the speech synthesis says the syllable count of each line is wrong but the sum of the words is correct, or vice versa, and I had to make some decisions on which of those to keep. I’ve made better decisions in this version than the last one, but I may well change things in the next version if it gives better results.
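The word-by-word approach can be sketched roughly like this. It is a minimal sketch in Python, not Haiku Detector’s actual code: `SYLLABLES`, `count_syllables` and `split_haiku` are names made up for illustration, and the syllable counts come from a tiny hand-written table rather than from the speech synthesiser, so none of the context and punctuation effects show up here.

```python
import string

# Hypothetical syllable table for this demo only. Haiku Detector asks the
# Mac speech synthesiser instead, which also takes context into account.
SYLLABLES = {"easy": 2, "became": 2, "equated": 3}

def count_syllables(word):
    """Look the word up, assuming one syllable for anything not listed."""
    bare = word.strip(string.punctuation).lower()
    return SYLLABLES.get(bare, 1) if bare else 0

def split_haiku(sentence, pattern=(5, 7, 5)):
    """Return the haiku lines if the words fit the pattern exactly, else None."""
    words = sentence.split()
    lines, index = [], 0
    for target in pattern:
        line, total = [], 0
        # Take words until this line has reached its syllable target.
        while index < len(words) and total < target:
            total += count_syllables(words[index])
            line.append(words[index])
            index += 1
        if total != target:
            return None  # a word straddles the line break, or the words ran out
        lines.append(" ".join(line))
    # Leftover words mean the sentence is longer than a haiku.
    return lines if index == len(words) else None

print(split_haiku("It is easy to see how the mind and the brain became equated."))
```

Because this sketch sums per-word counts, it can only ever find haiku where the words add up line by line; the cases where the whole sentence is pronounced with 17 syllables but the words are not are exactly the ones it would get wrong.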

Here are some interesting examples of words which are pronounced differently depending on punctuation or context:

| Text | How it is pronounced |
| --- | --- |
| ooohh | Pronounced with one syllable, as you would expect |
| ooohh. | Pronounced with one syllable, as you would expect |
| ooohh.. | Spelled out (Oh oh oh aitch aitch) |
| ooohh… | Pronounced with one syllable, as you would expect |
| H H | Pronounced aitch aitch |
| H H H | Pronounced aitch aitch aitch |
| H H H H H H H H | Pronounced aitch aitch aitch |
| Da-da-de-de-da | Pronounced with five syllables, roughly as you would expect |
| Da-da-de-de-da- | Pronounced dee-ay-dash-di-dash-di-dash-di-dash-di-dash. The dashes are pronounced for anything with hyphens in it that also ends in a hyphen, despite the fact that when splitting Da-da-de-de-da-de-da-de-da-de-da-de-da-da-de-da-da into a haiku, it’s correct punctuation to leave the hyphen at the end of the line:<br>Da-da-de-de-da-<br>de-da-de-da-de-da-de-<br>da-da-de-da-da<br>Though in a different context, where - is a minus sign, and meant to be pronounced, it might need to go at the start of the next line. Greater-than and less-than signs have the same ambiguity, as they are not pronounced when they surround a single word as in an HTML tag, but are if they are unmatched or surround multiple words separated by spaces. Incidentally, surrounding da-da in angle brackets causes the dash to be pronounced where it otherwise wouldn’t be. |
| U.S or u.s | Pronounced you dot es (this way, domain names such as angelastic.com are pronounced correctly.) |
| U.S. or u.s. | Pronounced you es |
| US | Pronounced you es, unless in a capitalised sentence such as ‘TAKE US AWAY’, where it’s pronounced ‘us’ |
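To illustrate the hyphen placement from the Da-da-de-de-da- example, here is a small Python sketch that splits a hyphenated string into lines and leaves each hyphen at the end of the line. The function name `split_hyphenated` is made up, and treating every hyphen-separated part as one syllable is an assumption that happens to hold for ‘da’ and ‘de’ but not in general.

```python
def split_hyphenated(text, pattern=(5, 7, 5)):
    """Split a hyphenated string into lines, treating each hyphen-separated
    part as one syllable (an assumption, fine for 'da' and 'de')."""
    parts = text.split("-")
    if len(parts) != sum(pattern):
        return None  # not the right number of parts for this pattern
    lines, start = [], 0
    for length in pattern:
        chunk = "-".join(parts[start:start + length])
        start += length
        # Keep the hyphen at the end of every line except the last.
        lines.append(chunk + "-" if start < len(parts) else chunk)
    return lines

for line in split_hyphenated("Da-da-de-de-da-de-da-de-da-de-da-de-da-da-de-da-da"):
    print(line)
```

Lines built this way end in a hyphen, which is the case where the speech synthesis starts pronouncing the dashes, so the syllable count of a line would have to be checked without the trailing hyphen.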

I also discovered what I’m pretty sure is a bug, and I’ve reported it to Apple. If two carriage returns (not newlines) are followed by any integer, then a dot, then a space, the number is pronounced ‘zero’ no matter what it is. You can try it with this file; download the file, open it in TextEdit, select the entire text of the file, then go to the Edit menu, Speech submenu, and choose ‘Start Speaking’. Quite a few haiku were missed or spuriously found due to that bug, but I happened to find it when trimming out harmless whitespace.
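If you want to check whether your own text contains the pattern that triggers this, a regular expression is enough. This is a rough Python sketch based only on the description above (two carriage returns, an integer, a dot, a space); `TRIGGER` and `find_triggers` are made-up names, and I haven’t tested which variations of the pattern the speech synthesis actually trips over.

```python
import re

# Two carriage returns (\r, not \n), then an integer, a dot and a space.
TRIGGER = re.compile(r"\r\r(\d+)\. ")

def find_triggers(text):
    """Return (offset, number) pairs for each place the pattern occurs."""
    return [(match.start(), match.group(1)) for match in TRIGGER.finditer(text)]

sample = "Some introduction\r\r42. The answer"
for offset, number in find_triggers(sample):
    print(f"Found {number!r} after two carriage returns at offset {offset}")
```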

Apart from that bug, it’s all very clever. Note how even without the correct punctuation, it pronounces the ‘dr’s and ‘st’s in this sentence correctly:

the dr who lives on rodeo dr who is better than the dr I met on the st john’s st turnpike

However, it pronounces the second ‘st’ as ‘saint’ in the following:

the dr who lives on rodeo dr who is better than the dr I met in the st john’s st john

This is not just because it knows there is a saint called John; strangely enough, it also gets this one wrong:

the dr who lives on rodeo dr who is better than the dr I met in the st john’s st park

I could play with this all day, or all night, and indeed I have for the last couple of days, but now it’s your turn. Download the new Haiku Detector and paste your favourite novels, theses, holy texts or discussion threads into it.

If you don’t have a Mac, you’ll have to make do with a few more haiku from the New Scientist special issue on the brain which I mentioned in the last post:

Being a baby

is like paying attention

with most of our brain.

But that doesn’t mean

there isn’t a sex difference

in the brain,” he says.

They may even be

a different kind of cell that

just looks similar.

It is easy to

see how the mind and the brain

became equated.

We like to think of

ourselves as rational and

logical creatures.

It didn’t seem to

matter that the content of

these dreams was obtuse.

I’d like to thank the people of the xkcd Time discussion thread for writing so much in so many strange ways, and especially Sciscitor for exporting the entire thread as text. It was the test data set that kept on giving.

Eight of Spades: The Synaesthetist

Posted by Angela Brett in Birds of Canada, Schmetterlinge, Writing Cards and Letters on April 29, 2012

I’ve mentioned before that I have grapheme-colour synaesthesia. That means that I intuitively associate each letter or number with a colour. The colours have stayed the same throughout my life, as far as I remember, and they are not all the same colours that other grapheme-colour synaesthetes (such as my father and brother) associate with the same letters. I still see text written in whichever colour it’s written in, but in my mind it has other colours too. If I have to remember the number of a bus line, there’s a chance I’ll remember the number that goes with the colour it was written in rather than the correct number, or I’ll remember the correct number and look in vain for a bus with a number written in that colour.

Well, I’ve been wondering whether it could work the other way.

- Could grapheme-colour synaesthetes learn to look at a sequence of colours that correspond to letters in their synaesthesia, and read a word?

- Could this be used to send code messages that only a single synaesthete can easily read?

- Could colours be used to help grapheme-colour synaesthetes learn to read a new alphabet, either one constructed for the purposes of secret communication, or a real script they will be able to use for something?

- What would be the difference in learning time for a grapheme-colour synaesthete using their own colours for the replacement graphemes, a grapheme-colour synaesthete using random colours, and a non-synaesthete?

I know that for me, there are quite a few letters with similar colours, and a few that are black or white, so reading a novel code wouldn’t be infallible, but I suspect I would be able to learn a new alphabet a little more easily or read it more naturally if it were presented in the ‘right’ colours. I wonder whether the reason the Japanese symbol for ‘ka’ seemed so natural and right to me was that it seemed to be the same colour as the letter k.

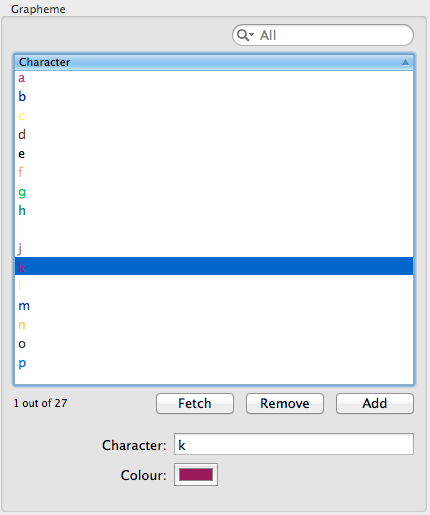

It occurred to me that, as a programmer and a grapheme-colour synaesthete, I could test these ideas, or at least come up with some tools that scientists working in this area could use to test them. So I wrote a little Mac program called Synaesthetist. You can download it from here. In it, you choose the colours that you associate with different letters (or just make up some if you don’t have grapheme-colour synaesthesia and you want to know what it’s like) and save them to a file.

Then you can type in some text, and you’ll see the text with the letters in the right colours, like so:

But even though this sample is using the ‘right’ colours for the letters, it still looks all wrong to me. When I think of a word, usually the colour of the word is dominated by the first letter. So I added another view with a slider, where you can choose how much the first letter of a word influences the colours of the rest of the letters in the word.
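The slider amounts to mixing two colours. Here is a minimal Python sketch of that idea, assuming a plain linear mix of RGB values; Synaesthetist may well blend colours differently, and `blend`, `colour_word` and the example colours are made up for illustration.

```python
def blend(first, own, influence):
    """Linearly mix two (r, g, b) colours. influence is the slider value:
    0.0 gives the letter's own colour, 1.0 gives the first letter's colour."""
    return tuple(round(f * influence + o * (1 - influence))
                 for f, o in zip(first, own))

def colour_word(word, colours, influence, default=(0, 0, 0)):
    """Return one blended colour per letter of the word."""
    if not word:
        return []
    first = colours.get(word[0].lower(), default)
    return [blend(first, colours.get(letter.lower(), default), influence)
            for letter in word]

# Hypothetical colours, not my real ones.
colours = {"k": (255, 0, 0), "a": (0, 0, 255)}
print(colour_word("ka", colours, 0.0))  # each letter in its own colour
print(colour_word("ka", colours, 1.0))  # whole word in the colour of 'k'
```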

This shows reasonably well what words are like for me, but sometimes the mix of colours doesn’t really resemble either original colour. It occurred to me that an even better representation would be to have the letters in their own colours, but outlined in the colour of the first letter. So I added that:

Okay, so that gives you some idea of what the words look like in my head. And maybe feeding text through this could help me to memorise it. Here’s an rtf file of the lyrics to Mike Phirman’s song ‘Chicken Monkey Duck’ in ‘my’ colours, with initial letter outline. I’ll study these and let you know if it helps me to memorise them. To be scientific about it, I really should recruit another synaesthete (who would have different colours from my own, and so might be hindered by my colours) and a non-synaesthete to try it as well, and define exactly how much it should be studied and how to measure success. But I’m writing a blog, not running a study, so if you want to try it, download the file. (I’d love it if somebody did run a study to answer some of my questions, though. I’d add whatever features were necessary to the app.)

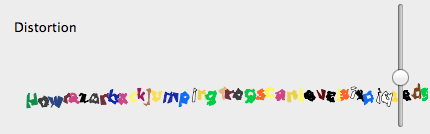

But these functions don’t go too far in answering the questions I asked earlier. How about reading a code? Well, I figured I’d be more likely to intuit letters from coloured things if they looked a little bit like letters: squiggles rather than blobs. So first I added a view that simply distorts the letters randomly by an amount that you can control with the slider. I did this fairly quickly, so there are no spaces or word-wrapping yet.
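Here is a rough Python sketch of distortion controlled by a slider amount. It differs from the view described here in one respect, which is an assumption of the sketch rather than how the app works: it seeds the random generator with the letter itself, so every occurrence of a letter gets the same offsets. The name `letter_offsets` and the idea of nudging a handful of control points are also made up for illustration.

```python
import random

def letter_offsets(letter, amount, points=4):
    """Return the same pseudo-random (dx, dy) offsets every time for a
    given letter, scaled by the distortion amount from the slider."""
    rng = random.Random(letter)  # seeding with the letter makes it repeatable
    return [(rng.uniform(-1, 1) * amount, rng.uniform(-1, 1) * amount)
            for _ in range(points)]

# The same letter always distorts the same way; amount 0 leaves it alone.
print(letter_offsets("k", 2.0) == letter_offsets("k", 2.0))
print(letter_offsets("k", 0.0))
```

Leaving out the seed (plain `random.Random()`) would give a fresh distortion on every call instead, which is closer to what the distorted view does now.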

I can’t read it when it gets too distorted, but perhaps it’s easier to read at low distortion than it would be if the letters were all black. Maybe I’d be able to learn to ‘read’ the distorted squiggles based on colour alone, but I doubt it. This randomly distorts the letters every time you change the distortion amount or change the text, and it doesn’t keep the same form for each occurrence of the same letter. Maybe if it did, I’d be able to learn and read the new graphemes more easily than a non-synaesthete would. Okay, how about just switching to a font that uses a fictional alphabet? Here’s some text in a Klingon font I found:

I know that Klingon is its own language, and you can’t just write English words in Klingon symbols and call it Klingon. But the Futurama alien language fonts I found didn’t work, and Interlac is too hollow to show much colour.

Anyhow, maybe with practice I’ll be able to read that ‘Klingon’ easily. I certainly can’t read it fluently, but even having never looked at a table showing the correspondence between letters and symbols, I can figure out some words if I think about it, even when I copy some random text without looking. I intend to add a button to fetch random text from the web, and hide the plain text version, to allow testing of reading things that the synaesthete has never seen before, but I didn’t have time for that.

Another thing I’ll probably do is add a display of the Japanese kana syllabaries using the consonant colour as the outline and the vowel colour as the fill.
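As a sketch of how that might work, assuming the kana are given in romaji and non-empty: the first consonant letter picks the outline colour and the final vowel picks the fill. This is Python for illustration only; `kana_colours` and the colours below are made up, and a kana with no consonant (or the lone ‘n’) simply uses its one colour for both.

```python
def kana_colours(romaji, colours, default=(0, 0, 0)):
    """Return (outline, fill) for a kana written in romaji, such as 'ka'.
    The consonant colours the outline and the vowel colours the fill;
    a single letter such as 'a' or 'n' uses its own colour for both."""
    last = romaji[-1]        # the vowel, or the whole kana if one letter
    consonant = romaji[:-1]  # may be empty, or several letters as in 'shi'
    fill = colours.get(last, default)
    outline = colours.get(consonant[:1], default) if consonant else fill
    return outline, fill

# Hypothetical colours, not my real ones.
colours = {"k": (200, 30, 30), "a": (240, 240, 240)}
print(kana_colours("ka", colours))
```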

Here’s a screenshot of the whole app:

As I mentioned, you can download it and try it for yourself. It works on Mac OS X 10.7, and maybe earlier versions too. To use it, either open my own colour file (which is included with the download) or create a new document and add some characters and colours in the top left. Then enter some text on the bottom left, and it will appear in all the boxes on the right side. If you change the font in the bottom left, say to a Klingon font, it will change in all the other displays except the distorted one.

This is something I’ve coded fairly hastily on the occasional train trip or weekend, usually forgetting what I was doing between stints, so there are many improvements that could be made, and several features already halfway developed. It could do with an icon and some in-app help, too. I’m still working on this, so if you have any ideas for it, I’m all ears.