Posts Tagged video

Seddit 1.5 supports multilingual Reddit listening. Also, Joey sang my half-baked PSOLA song!

Posted by Angela Brett in My Software on October 9, 2025

A while ago I added the possibility to configure Seddit (my text-to-speech-focused hands-free Reddit client for macOS and iOS) with multiple voices so that each user’s content could be read in a different voice. Of course, iOS and macOS come with voices that speak a huge variety of different languages, so you could theoretically select, say, a Japanese voice, a French voice, and three English voices, and download Reddit posts and comments in all of those languages. However, until now, Seddit would randomly assign a voice to each user, without regard for the language that user had written in, so if you did that, you could end up with English posts pronounced as if they were French, Japanese character names read out in English, and so on.

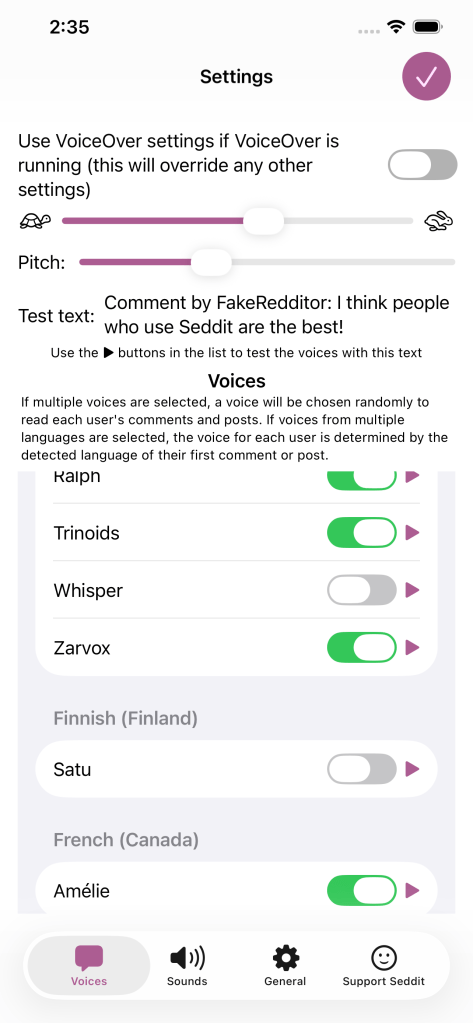

In the latest version, if you select voices that speak multiple languages in the Voices tab of the Settings screen, when Seddit encounters a post or comment by a user it hasn’t chosen a voice for yet, it will detect which of those languages the post or comment is probably in, and choose a voice that knows how to pronounce that language.

Of course, this isn’t perfect — it still always uses the same voice for each user, so if a user sometimes posts in French, and sometimes in English, or if they write in multiple languages within a single post because it’s a language-learning subreddit, then some of that is going to be spoken using an inappropriate voice. Also, if someone only writes in English but the first comment that Seddit encounters of theirs is an image meme and the text ‘c’est la vie!’ Seddit might determine that the user speaks French, and then hilariously mispronounce the rest of their posts. Note, if there is not enough text in the user’s first post for Seddit to even guess the language, it will not definitively choose a voice for that user until it encounters another post by them. I have yet to find either of these situations in practice, even while looking for them, so I hope it’s a rare issue.

Nonetheless, all of these situations are better than Seddit just randomly picking a voice for each user, regardless of which language they happen to be writing in. You should try it out, especially if you want to listen to Reddit content in various languages!

I also redesigned the Settings screen on iOS and iPadOS so it’s fullscreen and has a close button in the top right, as per Apple’s human interface guidelines, instead of a ‘Done’ button taking up a lot of space at the bottom and making the tabs look weird.

Note, while writing this post, I tested the regular ‘Start Speaking’ menu command on macOS and the ‘Speak’ command on iOS and found that it will sometimes switch to appropriate voices if I select multilingual text, even if my System Speech Language est réglé sur えい語。 Okay, it doesn’t work well for the French/English parts of that sentence. Maybe it’s only good with switching between languages if I switch scripts, e.g. בַּרְוָזָן утконос カモノハシ. Yep, that works, although if I select any other text along with πλατύπους, it’ll read it as ‘Greek small letter pi’ etc. I guess Greek letters are used too often in English for the speech engine to assume we actually switched to Greek. There were certainly plenty of Greek letters in the Princeton Companion to Mathematics.

Anyhow, I’m thinking I could improve Seddit further by giving each user a voice in each language you’ve selected voices for, and detecting the language for each post/comment, or for each sentence. Though macOS doesn’t do that unless you switch scripts… when I tried adding ‘J’imagine qu’il choisit une nouvelle langue pour chaque phrase.’ as a separate sentence and selected it along with a few English sentences, it read the whole thing in a French voice.

On the subject of interesting text-to-speech behaviour, and interesting behaviour in general, remember my half-written Lola parody about Pitch Synchronous Overlap and Add? Well, the lovely Joey Marianer had an appointment in town a while ago, and sneakily recorded the song in a parking building as a surprise, because I’m usually home so there’s little chance to record things at home without my hearing. I was duly surprised and delighted. Even the disclaimer about the missing bridge sounds like it scans as a bridge! Now you can also be surprised, delighted, and probably confused as to why this half-baked song was considered worth singing.

James Webb Space Telescope (now actually sung) and Seddit 1.4

Posted by Angela Brett in My Software, video on September 26, 2025

In my last post I gave lyrics to a parody of an Arrogant Worms song about the James Webb Space Telescope, and an update to my text-to-speech focussed Reddit client Seddit. I also said two things that turned out to be false:

- Joey and I will probably sing this parody, but it will take more mixing and video editing than our usual songs.

- This completes all the major features I have planned the app — I have other ideas for improvement, but I don’t think they’re essential. I’m hoping that the next update will be simply to remove the text saying I’m looking for a job.

Well, the other night Joey asked if I wanted to sing the song, and I said, “Okay! I should change into a more space-related shirt first” and then Joey produced two James Webb Space Telescope T-shirts out of nowhere, having secretly ordered them previously. So we changed into the shirts, and then we sang it, directly into a camera together, with no warmup or practice, and Joey trimmed the ends and put the video on YouTube. I had thought we’d sing our separate parts, get them perfect, then mix them, and make a video with some relevant educational images. Instead, here’s an imperfect but pretty good recording already!

I know where I made a mistake, but I’m not going to hang a lampshade on it so you’ll notice.

As for Seddit, well, not only did I not get the job I was hoping for when I wrote that, I also decided to update the app to use the new Liquid Glass design language that came out with iOS and macOS 26. I found and fixed a few other issues along the way. Here are the changes in Seddit 1.4:

- Features

- Added support for liquid glass appearance in iOS/macOS 26

- Moved playback controls to a liquid glass overlay so you can see more content around the edges

- Bug fixes

- Made sure compliments purchased on the Support Seddit screen are always shown in the same order

- Made the Voices Settings screen on macOS show which voices are Enhanced or Premium (I also filed bug FB20362911 with Apple about this, because there’s some system behaviour that’s inconsistent between iOS and macOS)

- Fixed an issue introduced in Seddit 1.2 whereby posts whose comments are not all read would be shown as read instead of partly read

You can get the latest version for Mac, iPhone, or iPad on the relevant App Store.

On the subject of songs and liquid glass, check out this song by James Dempsey about liquid glass:

Thanks to Seattle Xcoders, I was lucky enough to have seen the live debut of this, and another performance of it, which I recorded but don’t have permission to share yet.

I haven’t actually had any legibility issues with liquid glass though — and if I did, I know I could always turn on Reduce Transparency.

So I leave my bags behind (Galilee Song parody, now actually sung!) and another new version of Seddit

Posted by Angela Brett in My Software, video on September 2, 2025

Hey look, Joey Marianer sang the parody song lyrics from my last post! Check there for the lyrics and the aviation incidents referenced.

There are some more song parody lyrics, but first, a word from my sponsor: me. Just like last time, I’ve released a new version of Seddit, my text-to-speech-focussed Reddit client for macOS and iOS. This has a feature I’ve wanted to add for a while — the possibility to select multiple voices, and read each user’s posts and comments in a different one. The variety makes it easier to keep paying attention when listening for a long time, and having each user consistently use the same voice should make it easier to follow conversations.

I made some other changes in this version too. Here’s a full list of them:

Features

- Added the possibility to have each user’s posts and comments spoken in a different voice

- Added settings for whether to read out the subreddit name, and date and time for each post.

- Added the option to load no comments — this was for Joey, who wanted to try listening to short story subreddits while obeying the “don’t read the comments” rule of the internet.

Bug fixes

- Fixed a bug whereby turning off the ‘Say “Link” instead of reading out URLs’ setting would not work

- Fixed a bug where comments that weren’t loaded would be read as “comment by unknown user” Comments that aren’t loaded due to the comment depth settings are also no longer displayed.

- Fixed a potential crash when opening the app if posts had been deleted on another device

On the subject of text-to-speech, nine or ten years ago I read a book and a bunch of papers on speech synthesis in order to write a term paper for my Web Development for Linguistics degree. The term paper was longer than the text of my thesis, because my thesis also included source code for a web site and a Mac app. Anyway, from this book I learnt about PSOLA (Pitch Synchronous Overlap and Add) which is used to change the pitch and duration of sounds for text-to-speech, as one might do to change prosody, or create a robot choir.

Newer voices don’t use PSOLA so much, as (to put it simply) they have more samples of actual speech in different situations, so they don’t need to modify samples for the sake of prosody. Note, this is ‘newer voices’ as of a decade or two ago; I don’t know whether the latest crop of ML-based voices do things the same way. Anyway, I assume this is why the newer macOS voices don’t support the TUNE format I used for my robot choir.

At the time, I wrote an utterly silly partial parody of Lola, by The Kinks, about PSOLA. I thought maybe I’d finish it or maybe even make it less silly[why?], but I never did, and now I don’t remember enough about how PSOLA works to fully understand what I originally wrote. So here is that draft. It really doesn’t scan, but I hope it doesn’t scan in amusing ways:

I was trying to synthesise some prosody,

but my source and filter were mixed up just like granola

G-R-A-N-O-L-A, granola.

So I found a new way to make it sound rad

It’s called pitch-synchronous overlap and add, that is PSOLA

P-S-O-L-A PSOLA. Pso-pso-pso-P-SOLA.

Well I didn’t want to sound like a smallpox blight

So I really took care with my to get my epochs right

for PSOLA. Pso-pso-pso-P-SOLA.

If you’re not dumb then you’ll soon understand

How I speak like a woman then sound like a man

It’s P-SOLA. Pso-pso-pso-P-SOLA. Pso-pso-pso-P-SOLA.

[It doesn’t look like I wrote anything for the bridge (is that a bridge?) of the song, so just pretend it keeps going roughly like before]

It was used to make synthesized speech sound natural

But now there’s some super-sized features that fill that role-uh

R-O-L-E hyphen U-H role-uh

So that’s my guess if you’re wondering why r-

ecent voices don’t sing in my robot choir:

No PSOLA.

Sailing off into the sunset toward America

Posted by Angela Brett in Moving to the USA, video on July 27, 2025

As mentioned previously, I have an idea for a music video I’d like to make about my move to the US. But before I make that, I wanted to publish some of the video I took on the trip, in a fairly raw and unedited way, just to get it out there. I already published hours of 4K video from the ship leaving Hamburg, leaving Southampton, and arriving in New York City, recorded with my Sony ZV1 camera on a tripod.

Well, it was time to put together whatever random video I took with my iPhone. And I was just going to stick it all in a video with fades between clips, but there really wasn’t much going on in terms of sound — it needed music. And of course if there was going to be music, I’d better edit the footage a bit more to fit in with the music. So I ended up making something of an impromptu music video. Probably the coolest part (other than the music) is the sunset I recorded from the front of the ship one evening.

The song is ‘America’ by K’s Choice, as covered by my friend Joseph Camann when I requested it on his Patreon. Joseph is a multifaceted and multitalented individual who is also known as Chromatic Verse (mostly for visual art) CamannWordsmith (mostly for writing) and Joseph and the Bear Hat (for song covers.) It is unclear which parts the bear hat played in this cover.

I initially thought that the song ‘America’ would work better for the road trip across America than the trip across the pond, so I spent some time trying to find something else for this one… but come on, ‘America’ has a line about the sun rising and falling, and most of the video is a sunset. How could I not? Also there’s the double bonus of publicising both my friend Joseph and also one of my favourite bands, K’s Choice.

A day or so after we got to NYC, we visited MoMath, and I recently realised that while I’d put up video of Joey Marianer riding a square-wheeled tricycle there, I had forgotten to edit the other video I took of Joey at MoMath. Here’s Joey changing some benches from a triangle shape to a square and back, set to one of the free jingles that comes with Final Cut Pro:

That’s it for now. Stay tuned for a video of whatever I recorded on my phone during our ensuing road trip across the US, which I will inevitably spend more than the expected amount of effort on!

Some videos of my favourite rockstar developers

Posted by Angela Brett in News on July 14, 2025

Having released new versions of Lifetiler, I’m back to making a lot of progress on another app, which I hope to tell you about soon. But I don’t want to give you the impression that all I do these days is code… I also watch and record concerts of songs about code!

First of all, here’s the legendary James Dempsey performing some Swift-related songs at Deep Dish Swift, including a new one about sources of truth in SwiftUI (and elsewhere).

Here it is as a playlist of individual songs. I first heard of James Dempsey back in 2003 when the Model View Controller song he sang at WWDC leaked onto the internet. I then saw him debut Modelin’ Man in person at WWDC 2004. I believe I suggested to him at the time that he should release an album, and was excited when he released Backtrace in 2014.

When I heard he’d be playing at Deep Dish Swift 2025, that played a big part in getting me to sign up — I was hesitant as the hotel and flights were quite an expense for someone who didn’t have a job yet, though the conference was a tremendous networking opportunity. I got to meet many people in person that I’d previously only seen at iOS Dev Happy Hour, or maybe met once in-person at Core Coffee. Although I don’t know what my employment situation will be, I’ve already registered for Deep Dish Swift 2026.

When I heard James would be doing another pilot run of his App Performance and Instruments Virtuoso course, I signed up immediately. I had confirmation I’d registered within 16 minutes of getting the notification that it was happening. I’ve now completed the course, and the new version of Lifetiler is more performant because of it. Incidentally, I think I first heard the word ‘performant’ in French, and I still feel weird about using it in English. It just doesn’t feel like an English word.

Anyway, being a fan of James Dempsey is like waiting for a bus. You don’t see him for 20 years, and then two shows come along at once. Last month he performed at a Seattle Xcoders event, at a retirement party which the retiree was unfortunately unable to attend. I recorded his performance there too! This time there were two new songs — one about Liquid Glass, and another inspired by the recent passing of Bill Atkinson. I very much appreciated the latter, since I got my start in macOS development on HyperCard.

Here’s that one as a playlist of individual songs. I hear that James will be performing in Seattle again next month. I guess this is just a perk of living in the US. Living in the US is like waiting for a bus… you don’t see a single bus in years, but then three James Dempsey concerts show up at once.

And now for something completely different! Jonathan Coulton started this year’s JoCo Cruise with diarrhoea, and isolated for the first three days. When he eventually got back on stage, there were many jokes about his situation. I happened to remember that in 2015, he had joked that he was weirdly looking forward to the first ‘JoCo Poop Cruise’. He meant a cruise where everybody gets norovirus, but instead, this year, JoCo got his own personal Poop Cruise. While processing all my videos of the cruise, I kept clips of all the poop jokes so I could edit them together with that ill-fated wish, into this:

That’s all from me! I’m still writing my own apps, and still looking for a day job. While working on my next app (a text-to-speech-focused Reddit client), I’ve learnt about Swift Concurrency, SwiftData, CloudKit, AirPlay, and Media Player. It’s a lot of fun, especially being at the point of the project where there are so many important improvements I can make each day — and when I have one very excited TestFlight user giving feedback. But it would also be fun to have a day job with a salary, so if you know of anyone who’d be interested in hiring someone like me, put us in contact.

This is when the [poster] wall comes down

Posted by Angela Brett in Moving to the USA on November 11, 2024

Here’s a video in which I take down my posters in my apartment in Vienna, in order to pack them up to move to the USA. It includes improvised song parodies and silly jokes from Joey Marianer and myself.

I took this video partly to have a record of my poster wall (though I also have a photo of it which I sometimes use as a Zoom background) and partly because if I get permission to do so, I’ll make a music video of Sam Bettens’ song ‘Go’ documenting the entire move, and a sped-up version of this video will be used for the lyric ‘this is when the wall comes down’. For now I’ve just used that one clip of the song, since I suppose it’s short enough to be fair use. Other songs referenced in the video are:

- Guy Williams’ National Anthem of Libya from Taskmaster New Zealand

- Another Lobster, by Kornflake (which is a parody of Another Postcard, by Barenaked Ladies, but I realised when watching that yesterday that I hadn’t actually heard it before, and only knew of it via Another Lobster)

- Another Brick in the Wall by Pink Floyd

- Want You Gone by Jonathan Coulton, as sung by Ellen McLain on the Portal 2 soundtrack (it just seemed appropriate to end with ‘gone’, since I was starting with ‘Go’)

My posters are still in a crate on a truck somewhere between Montréal and here, so my home office currently only has a map of the route we took on the Queen Mary 2, and a Dogcow print, which I had wanted for a while but wasn’t prepared to pay the shipping and import fees for while I was living in Austria.

Aside from the music video, I have several other videos about moving here that I still need to edit, including:

- several hours of 4K footage from the Queen Mary 2 as it left Hamburg

- several more hours of 4K footage from the Queen Mary 2 as it arrived in New York.

- at least one more clip from MoMath

- various clips taken on my phone during our road trip from New York to my new home in the Seattle area

So watch this space! (I’m adding links as I upload the videos mentioned) Or subscribe to my YouTube channel and watch that space instead.

I’ll also put more photos from the trip on Flickr, so that’s another space you can watch. For now I’ve only put up panoramas from our pre-move trip to Fügen, our view of New York from the Queen Mary 2, and our road trip from NYC to Seattle.

In other news, Joey and I once again went to the MathsJam Annual Gathering in the UK. We didn’t give any talks, participate in the bake-off, enter a competition in the competition competition, or write any new parody songs for the MathsJam Jam this time. I won one of the competition competition competitions by writing the joke ‘What do you get if a Platonic solid loses a duel with its dual’ for the pre-determined punchline ‘the phantom of the solid’, but even that was just based on a poem I wrote (and performed) previously. We did, however, participate in Taskmathster as one of the Saturday evening activities, then two days later in London, we did the Taskmaster Live Experience. Both were a lot of fun!

Also, I have just released a new Mac app! It’s the one I made to create charts of days Joey and I have spent together while living apart (as seen in my previous post about moving to the USA). I’ll post more about it later today, but I think it needs its own post.

Dumb Parody Ideas at FuMPFest 2024

Posted by Angela Brett in News on October 9, 2024

FuMPFest is a funny music festival put on by the Funny Music Project, which I had never attended in-person because it’s not worth travelling from Austria to the USA for just a weekend. But now that I live in the USA, I finally got to go! It was put on as part of Con on the Cob, and was quite similar to the comedy music track at MarsCon (also run by people from The FuMP), which I have been to a few times when it happened to be a week out from JoCo Cruise. Both are approximately the same friendly group of comedy musicians and comedy music fans, having a small comedy music festival while surrounded by a larger convention.

One thing that happens at FuMPFest is the Dumb Parody Ideas contest, where people sing a few lines (up to 90 seconds per idea) of dubious song parodies. I had a few ideas for this years ago (I have a note with the lyrics from 2021), but never had a chance to enter… until now! The first one is a parody of Losing My Religion, by REM, inspired by the six and a half years of regular FaceTime calls with Joey while we were still living on separate continents:

Lyrics:

That’s me in the corner.

That’s me in the FaceTime, losing my connection.

The background is a screenshot I took while losing my connection in a real FaceTime call with Joey. The me in the corner was added in post, a little larger than the actual size of the inset which would have me in it. Joey’s playing ukulele offscreen.

The other parody idea I had was of Enya’s ‘Only Time’, which (like most things), Joey sings better than I could. We recorded a video of it before FuMPFest, because the only Dumb Parody Ideas panel I’d seen was at an online-only version of the con in 2020, so I wasn’t sure whether people would be doing them live for this one. The first take was pretty hilariously bad, setting us up to laugh through some of the later takes, so here’s the video with out-takes.

Lyrics:

Who can say where the road goes?

Where the day flows?

Google Maps.

In the end Joey did perform it live, followed by another dumb parody idea that Joey came up with on the day. A few hours before this panel, Devo Spice showed a short horror film which featured the song (of anonymous authorship) ‘I Sh💩t More in the Summer’. Joey parodied it with the things we do more at FuMPFest, taking inspiration from the FuMPFest bingo cards we were given.

Lyrics:

We chant COG! more at FuMPFest

than we do at any other time of year.

We yell ‘moisture!’ [more] at FuMPFest

than we do at any other time of year.

Eat from a food truck

“Corned beef and Cabbage”

Tune a guitar on stage

We all stall more at FuMPFest

than we do at any other… …stalling for time of year!

Both Losing My Connection and Google Maps were finalists in the competition, though we didn’t win the coveted golden spatula. Surprisingly, Joey’s last-minute parody was not nominated, despite the more developed lyrics and clear pandering to that specific audience.

Overall, we had a great time at FuMPFest. It was all streamed live on The FuMP’s Twitch channel, and at least for now, there are archives of the shows available there.

The next big thing on my calendar, which also includes song parodies and can be attended virtually, is the MathsJam Annual Gathering.

As promised in my last post, I put my new macOS app on TestFlight, and have already fixed some issues that were pointed out. It’s the app that made the chart of days that Joey and I have been together in person. It could be used to chart anything where you can summarise each day with a few colours or emoji — long-distance relationships, travel, moods, daily progress towards goals, the timeline of a novel you’re writing, weather, etc. If you’re interested in trying it, let me know somehow and I’ll add you to the list of testers. Otherwise, watch this space and get it when I release it some day soon.

When I’m not going to conventions, working on apps, and trying to convince various internet companies that I live here, I am still looking for a day job. Let me know if you know anyone who would like to hire me.

I swam halfway across the Atlantic Ocean, and now I live in the USA

Posted by Angela Brett in Moving to the USA on September 19, 2024

I keep thinking I shouldn’t post here until I’ve processed more of my photos and videos, updated my apps so I’ll look better for potential future employers, etc, but if I did that, the post would be absurdly long and late. This situation is of course fractal — I keep thinking I shouldn’t release my apps until I’ve fixed all those bugs, got everything working in VoiceOver, etc., but if I did that (as I have, many times in the past) the apps would simply never get released.

So, today I’ll write a blog post, and tomorrow I’ll either put my new macOS app on TestFlight, or submit new versions of NastyWriter and NiceWriter (which don’t work very well in the latest iOS, and also will fail to show ads because I’m halfway through moving my ad accounts to a new country.) Bug me if I don’t, and let me know if you want to test the apps. I should decide which one to release already so that I can focus on one thing at a time, but right now I’m focussing on writing this blog post, not on deciding which apps I can realistically get ready. See what I did there?

First things third: I made it to my new home in the Seattle area! I’m busy se(a)ttling in, changing all my online accounts to the new country (I’ll write a separate post about which hoops have to be jumped through for which accounts), and casually looking for work.

My lovely husband Joey Marianer came to Vienna to help with the moving, and many of my friends helped by taking my furniture and other things I didn’t need to keep. Then Joey and I took a train to Hamburg, the Queen Mary 2 ocean liner to New York City, and a road trip to our home in the Seattle area. It was of course in a pool on the Queen Mary 2 that I swam while we were halfway across the ocean.

Here’s a chart of all the days Joey and I have known each other, with appropriate emojis for the days we were together in person, and black squares for the days we weren’t. It was made by the aforementioned new app, which I started writing in an airport lounge one time when Joey’s flight left several hours before mine. This chart ends on September 6, 2024, because that way it would make a nice 49×56 rectangle. It also shows our first 256 days together, which is a nice round number in binary.

I’d never been to New York City before, so (after the no-longer-obligatory trip to Ellis Island the day I immigrated) we stayed for a few days before continuing. High on our list was visiting MoMath, the National Museum of Mathematics. I have a few videos from that, but here’s the one I’ve uploaded, of Joey riding a square-wheeled tricycle on a circular track made of inverted catenary curves:

Also in New York, we visited Liberty Island, Central Park, the Oculus, a few Sabrett’s hot dog stands, an annex of the Transit Museum, and the Apple Store on 5th avenue. I tried a rainbow bagel, some poorly-configured hot dogs (a friend from New York had recommended a particular combination of toppings, which neither stand gave us), and an Apple Vision Pro — I’d tried a friend’s one before, but without corrective lenses.

On the way home we stopped to meet friends in Toledo, Chicago, and Minneapolis. We visited Tony Packo’s (which has a large collection of autographed hot dog buns), Portillo’s (which has trays with deep recesses for cups, making it much easier to carry drinks around), American Science and Surplus (which has amusing signs and muzak) and the Minnesota State Fair (which has food on a stick).

Here’s a playlist of videos and podcasts that Joey and I showed each other because of things we saw during the trip:

There’s obviously a lot more to say and show about all of this, but I have to go finish some apps, so here’s a picture of the Statue of Liberty that I took from the Queen Mary 2 (previously posted on X and mathstodon).

My year is only going to get weirder, so I’d better fill you in.

Posted by Angela Brett in News on August 2, 2024

A lot has happened so far this year, and a lot more is about to happen. I have pics, so it did happen. TL; DR: I saw K’s Choice again, went to CERN again, went on the JoCo Cruise again, and will be moving to the USA in August. Just read the blue ‘Visa news’ section and the August part if you want to know how the US visa process went.

January: K’s Choice in Gent

🇺🇸Visa news

In mid-January, I got an email that began ‘Thank you for being a valued U.S. Consular Electronic Application Center (CEAC) customer.’ indicating that I’d finally got past step 1 of the 12-step program for me to quit Austria and go move in with the lovely Joey Marianer in the USA. Thus began a new phase of filling in forms and collecting documents.

On January 26, I went to Gent to see K’s Choice, one of the bands I have seen a fair bit at various places in Europe. I hadn’t them them since they were last in Vienna in 2017, so I missed them. A friend I know from JoCo fandom had suggested we go together. Unfortunately, she was too sick to go, but I did reconnect with someone I met at my very first K’s Choice concert, in Hamont-Achel in 2009.

Sam Bettens, the lead singer of K’s Choice, recently published a book which described (among many other things) his experience of moving to the USA to be with a spouse, as I am about to do. Even though the marriage didn’t work out, living in the USA did, so that was comforting to read about, as I mentioned to him after the show. I had too many things I wanted to say in a short time, and was a little flustered, so I forgot to ask for permission to post videos of the show, but they’ve always given it in the past, so here you go:

February: CERN Third Collisions

🇺🇸Visa news

In early February, I was notified that my Immigrant Visa Case had become Documentarily Qualified, meaning I’d completed step 9 of the process and just had to wait for my interview to be scheduled.

From 9–11 February, CERN had an event called Third Collisions, where CERN alumni could socialise, see some talks, and visit the new Science Gateway exhibits and the LHC experiments underground. I visited ALICE, because it was the only one of the four main detectors that I wasn’t 100% sure I’d seen before.

I haven’t put too much from the CERN visit online yet, but here’s a playlist where I will add videos:

Among other things, the Science Gateway had this tactile model of a particle detector, with an audio description to guide the visitor in feeling around the different parts of it. The various parts of the detector were differentiated with high-contrast colours and textures. Before visiting the Science Gateway, I happened to talk to someone who was involved in developing this; they had many ideas and prototypes for ways to explain particle detectors to blind and low-vision folk, and a lot of feedback from such people, and this is the only finished product that ended up in the exhibit.

Ironically, since I’m editing this post on my iPad, I can’t figure how to add alt text to this picture, but I think the audio description does a better job than I would have.

When I arrived in Vienna ten years ago, I assumed that I would soon forget French to learn German, so I did the DALF C1 exam to have proof that I once knew French. When I arrived in Geneva and had a conversation with a stranger at a bus stop, I realised I neither forgot French nor learned German — it was still so much easier to communicate in French. I did not make the most of this opportunity to learn German. This is partially because of circumstances (having to stop in the middle of four different B1-level German courses for different reasons, spending most of a year in NZ, and another few years hardly going outside due to the pandemic) but mostly because I didn’t put as much effort in. Or at least I didn’t listen to as many podcasts or read as many books in the language. Oh well; my level of German might still impress people in America.

March: JoCo Cruise (and a little MarsCon)

I shouldn’t need to tell you what JoCo Cruise is again. It’s where I met Joey, and thus, ultimately the reason I’m moving to the States. Joey and I stayed in Minnesota for a bit before this year’s cruise, because the flights from there to the cruise were more convenient, and it also gave us a chance to hang out with people we know from the MarsCon Comedy Music Track. As is often the case, MarsCon was on the same weekend as the start of the cruise, so we didn’t get to go, but at least we got to see people who arrived early.

We also saw a Jonathan Coulton and Aimee Mann concert with some friends from MarsCon and the cruise. At the show they mentioned, but did not play, a song Aimee Mann wrote based on a ChatGPT-generated title of a typical Jonathan Coulton song, ‘The Ballad of Captain Quark’. As someone who’s quite interested in quarks, I mentioned to JoCo on the cruise that I’d like to see it, and Aimee sang it at the final concert. Simalot posted video from the red team show and b$ shot this video of it on my camera in the gold team show.

Here is a playlist of the 25 hours, 5 minutes, and 17 seconds of unique JoCo Cruise 2024 footage captured by my cameras (some filmed by Joey on my second camera while I was at other events, some filmed by b$ while I was isolating in the cabin.)

🇺🇸Visa news

On the second-to-last day of JoCo Cruise, while Joey and I were holed up in our cabin getting over whatever lurgy we had caught (Joey tested negative for COVID, but it was an antigen test that didn’t come with instructions, so who knows?) we heard that my immigrant visa interview had been scheduled, which is to say, we’d reached step 10 of the immigration process.

April: US Visa Issued

Okay, I don’t have a video of this, but unless you’re an immigration officer working at the Port of New York and New Jersey in August, you’re not the people I have to prove it to. I heard that my visa was granted almost exactly 24 hours after leaving the visa interview (where they’d already told me I met the requirements, so it was not an agonising wait.) I then picked up my passport with the visa in it during my lunch break the next day.

I have six months from the date of issue to enter the country, at which point they will validate the visa and it will be good for a year, during which time I will receive an actual green card in the mail which will be valid for longer.

I then gave notice at my job and my apartment and figured out a moving company. Joey flew to Vienna not long ago, to help me with moving and then take me home. I am not keeping any of my furniture, so if you’re in Vienna and want some tables, bookcases, or other kinds of shelving or storage, let me know. Most of it has been claimed by now, though.

August: Moving to the USA

Joey and I will take the Queen Mary 2 from Hamburg to New York City, because that seemed like a sufficiently ridiculous way for me to immigrate. In NYC we plan to at least visit Ellis Island (it seems appropriate to do that after immigrating by sea) MoMath, Central Park (mainly because I love the Apple TV+ show by that name), 826NYC, and maybe Club Cumming, if JoCo Cruise 2024 and 2025 guest Daphne Always will be performing there. If you have any other suggestions on what to see in NYC, let me know! We haven’t decided yet when or how we’ll get from there to Joey’s place in the Seattle area.

After that, we’ll likely go to FuMPFest at Con on the Cob in October and MathsJam Annual Gathering (back on this side of the pond) in November, but the future hasn’t been written yet!